At the AWS Summit 2022 in Berlin, I had the chance to watch an inspiring talk by Gregor Hohpe, one of the software engineers who have undoubtedly had a lasting influence on the software engineering community. With their 2003 book “Enterprise Integration Patterns”, he and Bobby Woolf gave, and still give, engineers orientation and conceptual solutions for the recurring question of how to connect systems for data exchange while meeting the desired requirements.

The talk was co-authored and co-presented by Luis Morales, an AWS solutions architect, who helped bring these integration patterns and concepts from 2003 into the present using the capabilities of modern cloud services.

Admittedly, applying well-known patterns with recent technology doesn’t sound too exciting. We do the same with much older ones, such as the Gang of Four (GoF, 1994) patterns, e.g. Factory Method or Strategy.

The real deal, and the mind-blowing aspect of the talk for me, was how they built an architecture and its implementation in which infrastructure artifacts and concerns are abstracted so far behind the business and integration logic that the infrastructure building blocks almost seem to fade away. Their goal seems to be that infrastructure components are no longer subject to dedicated, separate provisioning or maintenance. Instead, they are part of an application that is expressed in its own domain-specific language (DSL), combining all logic and hardware-related concerns in one code base and using a single third-generation programming language!

In a moment we will look at how they approached this and how it connects to “Enterprise Integration Patterns” from 2003. We’ll also ponder which questions and implications this raises for the current state of cloud native software engineering, and how that state might soon evolve.

How the advent of serverless puzzled me and made me question well-established engineering practices

My thoughts and questions stem from the observation that, in the cloud native space, people have started breaking microservices down into even smaller pieces, using FaaS (Function as a Service) offerings such as AWS Lambda, API gateways, AWS Step Functions workflows that orchestrate Lambda functions, and so on. To me, that looked like an explosion in the number of deployment units, all of which now need to be managed and maintained. All that clutter for one microservice? I thought it was supposed to be micro already! So are we doing “nanoservices” now? And what does that imply? For example, it means more complexity when different FaaS units suddenly access the same data in a shared database.

It boils down to the question of how to organize many fine-grained infrastructure units into business applications that are still comprehensible and maintainable.

Or more generally put: How does cloud nativity change the way we think about software architecture?

By cloud nativity I mean taking full advantage of the vast landscape of cloud services and composing enterprise software from them, together with individually developed services.

Let’s now look at how Gregor’s and Luis’ talk fits into the puzzle.

Key concepts presented by Gregor and Luis

One thing their presentation certainly showed is that we are on a path of transformation from classical data center operations to cloud native operations of software. While many companies and teams have already successfully moved to a public cloud, most of them probably still follow the classical data center understanding of how to deliver software. That basically includes these activities:

- Provisioning: creating the infrastructure

- Deployment: deploying applications to that infrastructure

- Composition: ensuring all applications are correctly integrated (e.g. addresses of databases, remote endpoints and so on are correctly configured)

- Configuration: ensuring all applications are correctly configured

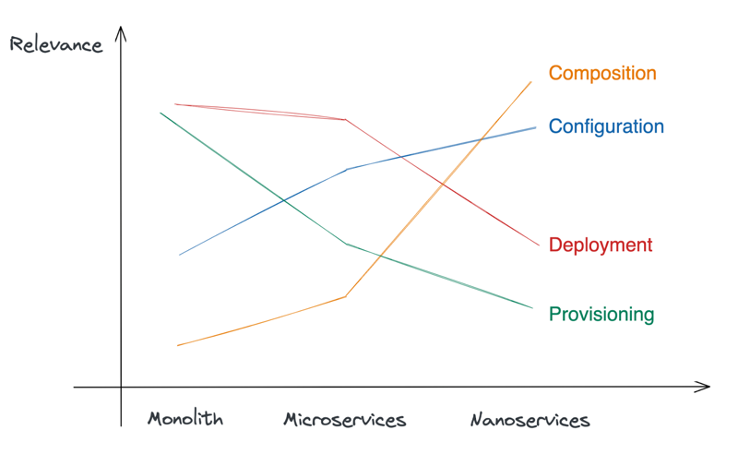

Given the fact, as mentioned earlier, that there is the tendency and incentive to compose modern cloud applications more and more of many single cloud services (Lambda, SQS, AWS Step Functions, Streaming services with Kinesis, to name a few from the AWS ecosystem), it turns out that the aspect of Composition suddenly requires more attention, simply because there are now many more parts to be integrated.

Figure 1: The Relevance shifts of different operation aspects over architectural patterns

Among other things, Gregor’s and Luis’ approach frees deployable applications from the composition aspect. Instead, they move it into the Infrastructure as Code (IaC) layer, where it is handled explicitly at one level of abstraction and where all cloud services, now including the custom-built ones, are composed in a single place. In their talk they state:

“Serverless automation isn’t about provisioning but about composition and configuration”

For example, in microservice architectures, services usually carry the knowledge about their integration destinations and their configuration. Gregor’s and Luis’ goal is to limit the scope of a service to the actual business task, so that it does not have to deal with integrations itself.

How? First of all: by using a “decent programming language” of course:

“You do not need declarative language to define infrastructure declaratively - you can use a decent programming language”

In the scope of their talk, “defining infrastructure” means describing whole business processes or services at the IaC level, using TypeScript and the AWS CDK. The resulting IaC code is quite expressive in terms of the domain concepts it implements: they built DSLs (domain-specific languages) at different levels and use these languages to compose the big picture.

One DSL is built upon the Enterprise Integration Patterns. The other is the DSL in which the whole application is expressed: the business language used to name the application’s artifacts.
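To make the idea of two layered DSLs more tangible, here is a minimal, in-memory TypeScript sketch. It is not the talk’s code and involves no AWS services; all names (recipientList, aggregator, askBanks, bestQuoteOf, Quote, Bank) are hypothetical. The lower layer offers generic integration building blocks (a Recipient List and an Aggregator), and the upper layer expresses loan broker vocabulary purely in terms of them.

```typescript
// Hypothetical sketch of two layered DSLs; in-memory only, no AWS services involved.

// ---- Integration DSL: generic Enterprise Integration Patterns ----
type Handler<T> = (message: T) => void;

// Recipient List: forwards a copy of every message to each recipient.
function recipientList<T>(recipients: Handler<T>[]): Handler<T> {
  return (message) => recipients.forEach((recipient) => recipient(message));
}

// Aggregator: collects messages until the batch is complete,
// then emits a single combined message and starts a new batch.
function aggregator<T, R>(
  isComplete: (batch: T[]) => boolean,
  combine: (batch: T[]) => R,
  next: Handler<R>,
): Handler<T> {
  let batch: T[] = [];
  return (message) => {
    batch.push(message);
    if (isComplete(batch)) {
      next(combine(batch));
      batch = [];
    }
  };
}

// ---- Business DSL: loan broker vocabulary built on the integration DSL ----
interface Quote {
  bank: string;
  rate: number;
}
type Bank = (amount: number) => Quote;

// "Ask all banks for a quote" is a Recipient List in business terms.
function askBanks(banks: Bank[], onQuote: Handler<Quote>): Handler<number> {
  return recipientList(banks.map((bank) => (amount: number) => onQuote(bank(amount))));
}

// "Pick the best quote" is an Aggregator that keeps the lowest rate.
function bestQuoteOf(expectedQuotes: number, onBest: Handler<Quote>): Handler<Quote> {
  return aggregator<Quote, Quote>(
    (batch) => batch.length === expectedQuotes,
    (batch) => batch.reduce((best, quote) => (quote.rate < best.rate ? quote : best)),
    onBest,
  );
}

// Usage: three hypothetical banks with fixed rates.
const banks: Bank[] = [
  () => ({ bank: "Premium", rate: 3.1 }),
  () => ({ bank: "Universal", rate: 4.2 }),
  () => ({ bank: "PawnShop", rate: 7.5 }),
];
const bestQuotes: Quote[] = [];
const requestLoan = askBanks(banks, bestQuoteOf(banks.length, (quote) => bestQuotes.push(quote)));
requestLoan(250000);
console.log(bestQuotes); // [ { bank: 'Premium', rate: 3.1 } ]
```

In the talk, the lower layer is backed by real cloud services composed with the CDK rather than by in-process functions; the sketch only illustrates how a business vocabulary can sit on top of an integration vocabulary.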

How did they achieve this for the integration patterns?

- Either by taking an existing cloud service and naming and using it in the role of an integration pattern. For example, AWS SNS serves as an implementation of the Message Router pattern.

- Or by combining different cloud integration services into one integration pattern. For example, the Message Filter pattern can be composed from Amazon EventBridge as the message bus and its event patterns as the actual filter implementation.
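The Message Filter idea can be sketched without any AWS dependency. The following TypeScript matcher mimics the shape of an EventBridge event pattern, in which each leaf lists the allowed values. It is a deliberate simplification: only exact value matching is supported, whereas real EventBridge patterns also offer operators such as prefix, numeric or anything-but matching. The names matches and messageFilter, as well as the sample event fields, are hypothetical.

```typescript
// Simplified, in-memory sketch of the Message Filter pattern, modelled on the
// shape of EventBridge event patterns. Assumption: exact-value matching only.

type Json = string | number | boolean | null | Json[] | { [key: string]: Json };
type JsonObject = { [key: string]: Json };
type Scalar = string | number | boolean | null;

// A pattern mirrors the event's structure; each leaf lists the allowed values.
interface EventPattern {
  [key: string]: EventPattern | Scalar[];
}

// True if every field named in the pattern is present in the event with an allowed value.
function matches(pattern: EventPattern, event: JsonObject): boolean {
  return Object.keys(pattern).every((key) => {
    const expected = pattern[key];
    const actual = event[key];
    if (Array.isArray(expected)) {
      // Leaf: the event value must equal one of the allowed values.
      return expected.some((allowed) => allowed === actual);
    }
    // Nested pattern: the event must hold an object at this key as well.
    return (
      typeof actual === "object" &&
      actual !== null &&
      !Array.isArray(actual) &&
      matches(expected, actual)
    );
  });
}

// The Message Filter passes matching messages on and silently drops the rest.
function messageFilter(pattern: EventPattern, next: (event: JsonObject) => void) {
  return (event: JsonObject): void => {
    if (matches(pattern, event)) next(event);
  };
}

// Usage with a hypothetical pattern: only premium quotes from the banks' source pass.
const passed: JsonObject[] = [];
const premiumOnly = messageFilter(
  { source: ["loanbroker.banks"], detail: { tier: ["premium"] } },
  (event) => passed.push(event),
);
premiumOnly({ source: "loanbroker.banks", detail: { tier: "premium", rate: 3.1 } });
premiumOnly({ source: "loanbroker.banks", detail: { tier: "universal", rate: 4.2 } });
premiumOnly({ source: "somewhere.else", detail: { tier: "premium", rate: 2.0 } });
console.log(passed.length); // 1
```

The filter itself only knows the pattern and the next hop; which events reach it, and where the survivors go, is decided by whoever composes it, which mirrors the role the event pattern plays on an EventBridge rule.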

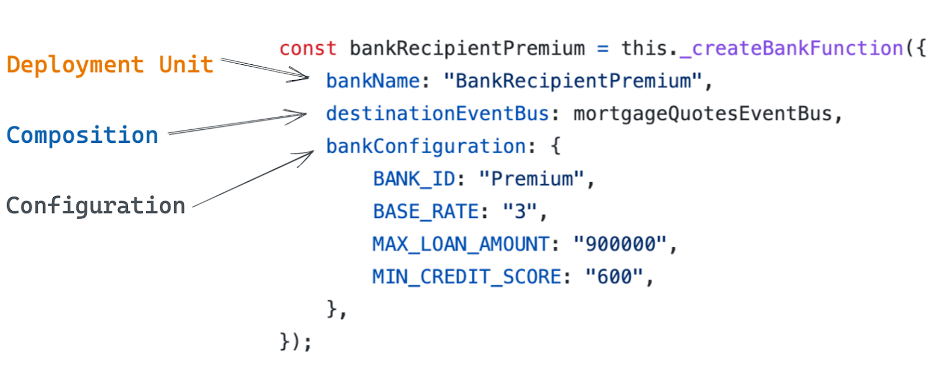

Let’s have a look at a CDK code snippet (IaC) from their example implementation of their loan broker use case in order to show an example of the business DSL:

Listing 1: DSL Example for creating a domain object for a Bank

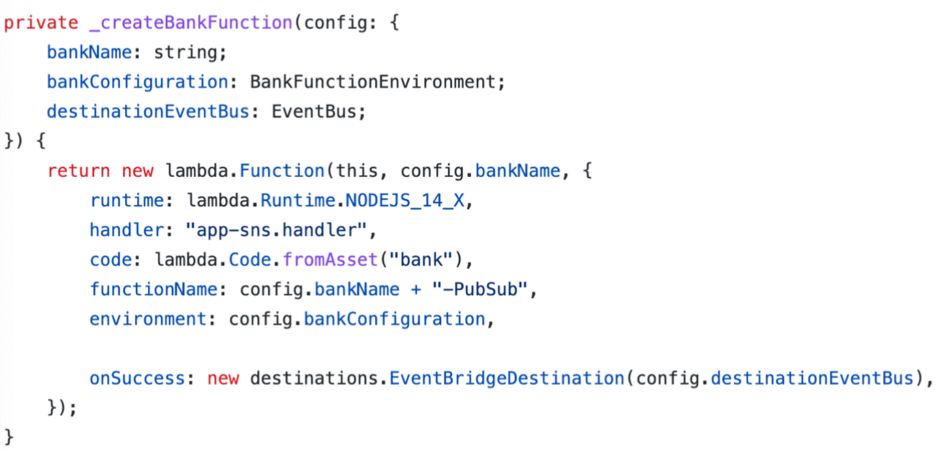

Listing 2: Bank creation - FaaS instantiation

This deployment unit (an AWS Lambda function) is one building block of a bigger business service, the loan broker, which is built around it. The object assigned to the constant bankRecipientPremium is a concrete instance of one bank. The function _createBankFunction is part of the business DSL, and following that pattern, the whole application is composed using that DSL.

Another interesting point is that the composition aspect, i.e. where to send the function’s results (the event bus defined by destinationEventBus), does not leak into the function’s implementation. The implementation code just produces results; it contains no information about recipients or any further processing of those results.

Instead, the event bus to which the banks’ results are relayed at runtime is determined solely at the IaC level.
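The separation described above can be illustrated with a small, self-contained TypeScript sketch: the handler only computes a quote, and a separate composition step decides where results go, similar in spirit to configuring an on-success destination for a Lambda function. InMemoryEventBus, withDestination, premiumBankHandler and its pricing rule are hypothetical stand-ins, not code from the example project; only the names bankRecipientPremium and destinationEventBus are borrowed from the listing.

```typescript
// Hypothetical, in-memory sketch: business logic vs. composition.
// No AWS APIs are used; InMemoryEventBus stands in for an EventBridge bus.

interface QuoteRequest {
  amount: number;
}
interface BankQuote {
  bankId: string;
  rate: number;
}

// Business logic only: computes a quote (or declines) and knows nothing
// about event buses, recipients or further processing. Made-up pricing rule.
function premiumBankHandler(request: QuoteRequest): BankQuote | undefined {
  if (request.amount > 1000000) return undefined; // declines very large loans
  return { bankId: "PremiumBank", rate: 3.1 };
}

class InMemoryEventBus {
  readonly name: string;
  readonly events: { source: string; detail: unknown }[] = [];
  constructor(name: string) {
    this.name = name;
  }
  put(source: string, detail: unknown): void {
    this.events.push({ source, detail });
  }
}

// Composition level: decides where successful results are relayed.
// The handler itself stays unaware of the destination.
function withDestination<I, O>(
  source: string,
  handler: (input: I) => O | undefined,
  destination: InMemoryEventBus,
): (input: I) => void {
  return (input) => {
    const result = handler(input);
    if (result !== undefined) destination.put(source, result);
  };
}

// Wiring: names borrowed from the listing, implementation hypothetical.
const destinationEventBus = new InMemoryEventBus("LoanBrokerBus");
const bankRecipientPremium = withDestination("bank.premium", premiumBankHandler, destinationEventBus);

bankRecipientPremium({ amount: 250000 });
bankRecipientPremium({ amount: 5000000 }); // declined, nothing is published
console.log(destinationEventBus.events.length); // 1
```

Swapping the destination, or adding a second one, only touches the wiring lines at the bottom; premiumBankHandler stays unchanged.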

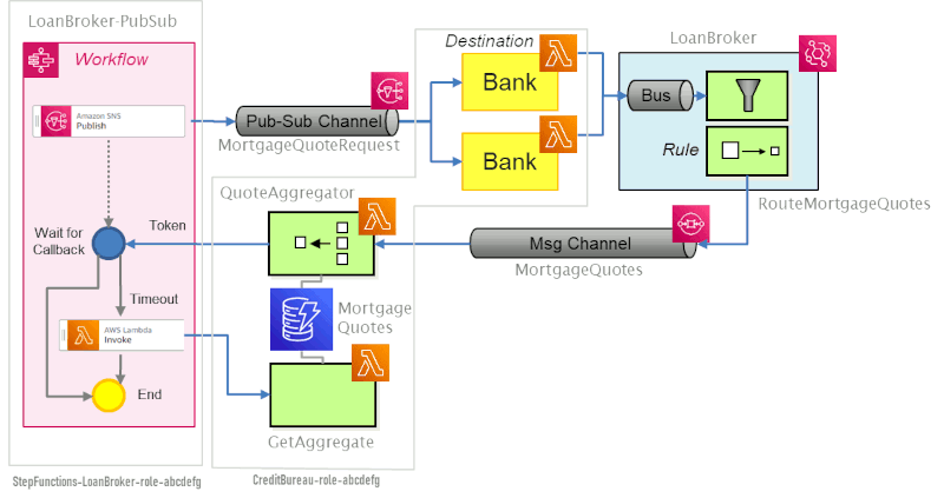

The whole application is implemented with these kinds of building blocks at the infrastructure level. Figure 2 gives an overview of the different artifacts that are composed in the AWS CDK application.

Figure 2: The example apps’ building blocks (Source)

I admit that, after watching the talk, I found the code in the example project less object-oriented than I expected, and it does not reveal the structure shown in Figure 2 as readily as I had hoped. While the code uses the domain language to identify the modules shown in Figure 2, e.g. “LoanBroker”, “MortgageQuotes” and “QuoteAggregator”, the overall structure of how they are interconnected is not easy to see.

However, I see it as a motivating example and a trigger for a possible paradigm shift: it invites us to think further about how to effectively express our understanding of the business domain at the IaC level, so that we get more out of the cloud than plain old provisioning and deployment.

I highly recommend watching the talk to fully grasp the key ideas.

Reflections

As Gregor’s and Luis’ presentation at the AWS Summit shows, we are probably standing at a turning point where, in enterprise software development, infrastructure might no longer be a concern that is treated and maintained separately from application and business code.

That raises some questions for me:

- How will code bases be organized?

- How well and effectively can they be tested? (See also the introduction to testing at the IaC level here.)

- Will IaC just disappear, with its concerns merged into homogeneous code bases that serve a particular business need?

- What does this mean for platform teams? Will they finally cease to exist, or will their range of responsibilities shift once again?

- Will declarative, script-based IaC solutions like Terraform with HCL (HashiCorp Configuration Language) soon be obsolete because they do not fit the new paradigm?

- What are the implications for today’s humble programmers and their stakeholders?

I do not have answers to these questions, but I would like to share my thoughts on some of them, and I look forward to learning about your questions and opinions, too.

(1) How will such code bases be organized?

As I said, I do not know. What I do know is what I would expect from those code bases: that they make it easy to understand which use cases they serve, what the underlying business rules are and where to find them. Gregor’s and Luis’ example shows that there are ways to encapsulate groups of (infrastructure and software) components or constructs according to domain concepts and to express their relationships. Could doing this more lucidly and explicitly simply be a question of good object-oriented design?
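One way to explore that question is sketched below in plain TypeScript: domain concepts become composite objects arranged in a tree, loosely inspired by how CDK constructs are nested through a scope and an id, and connections are recorded explicitly so that both the structure and the wiring can be listed. DomainConstruct, LoanBroker, connectTo, describe and wiring are hypothetical names, and no CDK APIs are used; only the module names come from Figure 2.

```typescript
// Hypothetical sketch: domain concepts as nested composite objects whose
// structure and connections can be listed, loosely inspired by CDK's
// (scope, id) construct tree. No CDK APIs are used.

class DomainConstruct {
  readonly id: string;
  readonly scope: DomainConstruct | undefined;
  readonly children: DomainConstruct[] = [];
  private readonly links: string[] = [];

  constructor(scope: DomainConstruct | undefined, id: string) {
    this.scope = scope;
    this.id = id;
    if (scope !== undefined) scope.children.push(this);
  }

  // Path from the root, e.g. "LoanBroker/MortgageQuotes".
  get path(): string {
    return this.scope === undefined ? this.id : `${this.scope.path}/${this.id}`;
  }

  // Records an explicit, named connection to another construct.
  connectTo(target: DomainConstruct, via: string): void {
    this.links.push(`${this.path} -> ${target.path} (${via})`);
  }

  // All construct paths in this subtree, depth first.
  describe(): string[] {
    return this.children.reduce<string[]>((acc, child) => acc.concat(child.describe()), [this.path]);
  }

  // All recorded connections in this subtree.
  wiring(): string[] {
    return this.children.reduce<string[]>((acc, child) => acc.concat(child.wiring()), this.links.slice());
  }
}

// The loan broker as one domain object that owns and wires its parts.
class LoanBroker extends DomainConstruct {
  readonly mortgageQuotes = new DomainConstruct(this, "MortgageQuotes");
  readonly quoteAggregator = new DomainConstruct(this, "QuoteAggregator");

  constructor() {
    super(undefined, "LoanBroker");
    this.mortgageQuotes.connectTo(this.quoteAggregator, "quotes");
  }
}

const broker = new LoanBroker();
console.log(broker.describe());
console.log(broker.wiring());
```

The design choice here is that a composite owns both its parts and their connections, so reading LoanBroker alone reveals how its modules relate, which is the kind of visibility I missed in the example project.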

(6) Implications for today’s humble programmers

Finally, I want to look at one of the most important questions for me: what are the implications for today’s rapidly growing developer community?

The following perspective is shaped by the historical context of the 1968 software crisis and by the impressions and reasoning Edsger W. Dijkstra put into his 1972 essay “The Humble Programmer”.

As many symptoms of the software crisis still seem to persist to some degree today, Dijkstra’s perspective might be more current than one would expect.

The background of the software crisis was, among other things:

“The main cause is that improvements in computing power had outpaced the ability of programmers to effectively use those capabilities.” (Wiki page Software Crisis)

I raise this in the context of the possible paradigm shift that I derived from Gregor’s and Luis’ talk, because that shift also represents a huge improvement in “computing power”. And since we as programmers are still dealing with some of the same symptoms as our predecessors did, I assume that making this paradigm shift is not a trivial task.

Let’s take two symptoms of the software crisis, as described on that wiki page, that we still constantly deal with:

- Software of low quality

- Code difficult to maintain

In my experience, low-quality and hard-to-maintain software shares two characteristics: the absence of tests, and the absence of domain concepts that can be derived from the code and its structural design. We will carry this debt with us into the paradigm shift and will need to keep advocating and fighting for making domain concepts explicit in the solutions we code. We already have well-known toolboxes at our fingertips to get there: OOP and DDD. Impulses like those from Gregor’s and Luis’ talk might make the difference compared to the situation Dijkstra described back in 1972.