The project

A customer has a public website that is often visited by automated bots.

An automated bot, or simply bot, is a software application or script that performs tasks on the internet in an automated and repetitive manner. Bots are designed to perform various functions and are typically programmed to interact with websites, applications, or other online services. They can be created for both legitimate and malicious purposes.

The customer’s problem is that some bots and other unidentified web clients consume the resources of its web servers and make them less responsive.

The customer asked for a solution to this problem. The solution should preferably not require any changes to the existing web server infrastructure.

Solution

We chose AWS WAF because it is easy to integrate into the customer’s setup and offers a predefined set of rules managed by AWS (the AWS WAF Bot Control rule group) that can detect different categories of bots. In addition, it supports rate-based rules and rules based on specific request attributes such as HTTP methods, URL paths, HTTP headers or IP address ranges.

Bot categories

List of all bot categories known to AWS WAF Bot Control:

| Category | Description |
|---|---|
| Advertising | Bots that are used for advertising purposes |
| Archiver | Bots that are used for archiving purposes |
| Content Fetcher | Bots that are fetching content on behalf of a user |
| Email Client | Email clients |
| Http Library | HTTP libraries that are used by bots |
| Link Checker | Bots that check for broken links |
| Miscellaneous | Miscellaneous bots |
| Monitoring | Bots that are used for monitoring purposes |
| Scraping Framework | Web scraping frameworks |
| Search Engine | Search engine bots |
| Security | Security-related bots |
| Seo | Bots that are used for search engine optimization |
| Social Media | Bots that are used by social media platforms to provide content summaries |
| AI | Artificial intelligence (AI) bots |
| Automated Browser | Inspects the request’s token for indicators that the client browser might be automated |
| Known Bot Data Center | Inspects for data centers that are typically used by bots |
| Non Browser User Agent | Inspects for user agent strings that don’t seem to be from a web browser |

Preparations

The next steps are performed in a dedicated test environment before the setup is deployed to production.

We start by writing some Terraform code that creates an Application Load Balancer (ALB) to which the WAF will be attached. We put the existing web servers into a target group to which the ALB routes its traffic. The ALB acts as an additional reverse proxy layer in front of the web servers.

We also create a Route 53 alias A record pointing to the ALB and a TLS certificate in AWS Certificate Manager (ACM) for that DNS record, since we only allow HTTPS traffic at the ALB. HTTP traffic is redirected to HTTPS using HTTP status code 302 (a temporary redirect; a permanent redirect would use 301). The HTTP Strict-Transport-Security response header is handled by the Caddy reverse proxy layer behind the ALB.

In addition, the ALB and WAF logs are configured to be sent to Datadog.

Adding WAF rules

Filtering specific URLs

First, we add a filtering rule to the WAF (block_webfonts_path) that blocks requests for the URI path /webfonts/2775253ec3a5.css. This resource no longer exists on the web servers but is still frequently requested because some misconfigured resources keep referencing it.
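A rule of this kind could look roughly like the following sketch, written as a Python dictionary that mirrors the AWS WAF rule JSON format. The exact-match constraint, the absence of a text transformation and the metric name are assumptions, since the original rule definition is not shown. The `matches` helper is hypothetical and only imitates the comparison locally.

```python
import json

# Sketch of block_webfonts_path in the AWS WAF rule JSON format.
# Assumptions: exact match on the path, no text transformation, metric name.
BLOCKED_PATH = "/webfonts/2775253ec3a5.css"

block_webfonts_path = {
    "Name": "block_webfonts_path",
    "Priority": 0,
    "Statement": {
        "ByteMatchStatement": {
            "SearchString": BLOCKED_PATH,
            "FieldToMatch": {"UriPath": {}},
            "TextTransformations": [{"Priority": 0, "Type": "NONE"}],
            "PositionalConstraint": "EXACTLY",
        }
    },
    "Action": {"Block": {}},
    "VisibilityConfig": {
        "SampledRequestsEnabled": True,
        "CloudWatchMetricsEnabled": True,
        "MetricName": "block_webfonts_path",
    },
}


def matches(rule: dict, uri_path: str) -> bool:
    """Hypothetical local stand-in for the WAF's EXACTLY comparison."""
    statement = rule["Statement"]["ByteMatchStatement"]
    return uri_path == statement["SearchString"]


# Print the rule as JSON, e.g. to paste into a rule JSON editor.
print(json.dumps(block_webfonts_path, indent=2))
```

Because the match is exact, other files under /webfonts/ are not affected by this rule.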

Bot Control

Then we add the AWS WAF Bot Control managed rule group (AWSManagedRulesBotControlRuleSet) to the WAF and select the “common” inspection level. At this inspection level, the WAF detects bots by statically analyzing request data.

This second rule, bot-control-default, is processed if the first rule does not match.
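As a hedged illustration, the rule that references the managed rule group might be declared like this, again as a Python dictionary mirroring the AWS WAF rule JSON format. The metric name is an assumption. The override action `None` means that the actions defined inside the rule group are used unchanged.

```python
import json

# Sketch of bot-control-default: references the AWS managed Bot Control
# rule group with the "common" inspection level.
# Assumption: the metric name; it is not given in the text.
bot_control_default = {
    "Name": "bot-control-default",
    "Priority": 1,
    "Statement": {
        "ManagedRuleGroupStatement": {
            "VendorName": "AWS",
            "Name": "AWSManagedRulesBotControlRuleSet",
            "ManagedRuleGroupConfigs": [
                {
                    "AWSManagedRulesBotControlRuleSet": {
                        "InspectionLevel": "COMMON"
                    }
                }
            ],
        }
    },
    # Keep the actions defined by the rule group itself.
    "OverrideAction": {"None": {}},
    "VisibilityConfig": {
        "SampledRequestsEnabled": True,
        "CloudWatchMetricsEnabled": True,
        "MetricName": "bot-control-default",
    },
}

print(json.dumps(bot_control_default, indent=2))
```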

This rule will do the following:

Should a request match against a signature of a verified bot, for example Microsoft’s Bing search engine, the request will be labeled by the WAF and the next rule will be processed (rules that only add labels as an action are not terminating rules, which means that the next rule is processed even if there’s a match).

If the rule matches, the following labels are added:

awswaf:managed:aws:bot-control:bot:name:bingbot

awswaf:managed:aws:bot-control:bot:organization:microsoft

awswaf:managed:aws:bot-control:bot:verified

awswaf:managed:aws:bot-control:bot:category:search_engine

Should the WAF identify a request as coming from a bot but not be able to match it against one of its signatures of verified bots, it will treat the bot as unverified and block the request. No further WAF rule will be processed.
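The labels listed above share a common namespace prefix, which makes them easy to take apart, for example when analyzing WAF logs. The following minimal sketch shows one way to do that; the function name and the shape of the returned dictionary are hypothetical.

```python
from typing import Iterable

BOT_CONTROL_PREFIX = "awswaf:managed:aws:bot-control:"


def parse_bot_labels(labels: Iterable[str]) -> dict:
    """Collect Bot Control label values into a small dictionary.

    Labels outside the Bot Control namespace are ignored.
    """
    info = {"verified": False}
    for label in labels:
        if not label.startswith(BOT_CONTROL_PREFIX):
            continue
        parts = label[len(BOT_CONTROL_PREFIX):].split(":")
        if parts == ["bot", "verified"]:
            info["verified"] = True
        elif len(parts) == 3 and parts[0] == "bot":
            # e.g. bot:name:bingbot is stored as info["name"] = "bingbot"
            info[parts[1]] = parts[2]
    return info


bing_labels = [
    "awswaf:managed:aws:bot-control:bot:name:bingbot",
    "awswaf:managed:aws:bot-control:bot:organization:microsoft",
    "awswaf:managed:aws:bot-control:bot:verified",
    "awswaf:managed:aws:bot-control:bot:category:search_engine",
]

print(parse_bot_labels(bing_labels))
```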

In this scenario, where our main goal is to prevent the web servers from running out of resources, we start with the most restrictive bot control rule set.

We block not only unverified bots but also verified ones. This is done with the rule bot-control-block-verified.

This rule looks for requests that are labeled awswaf:managed:aws:bot-control:bot:verified and blocks them.
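A label-matching rule like this could be sketched as follows, using a Python dictionary in the AWS WAF rule JSON format. The metric name is an assumption, and `label_rule_matches` is a hypothetical helper that only imitates the label comparison locally.

```python
VERIFIED_LABEL = "awswaf:managed:aws:bot-control:bot:verified"

# Sketch of bot-control-block-verified: blocks any request that an
# earlier rule has labeled as a verified bot.
# Assumption: the metric name; it is not given in the text.
bot_control_block_verified = {
    "Name": "bot-control-block-verified",
    "Priority": 2,
    "Statement": {
        "LabelMatchStatement": {
            "Scope": "LABEL",
            "Key": VERIFIED_LABEL,
        }
    },
    "Action": {"Block": {}},
    "VisibilityConfig": {
        "SampledRequestsEnabled": True,
        "CloudWatchMetricsEnabled": True,
        "MetricName": "bot-control-block-verified",
    },
}


def label_rule_matches(rule: dict, request_labels: set) -> bool:
    """Hypothetical local check: is the rule's label key on the request?"""
    key = rule["Statement"]["LabelMatchStatement"]["Key"]
    return key in request_labels
```

The rule only works because it has a higher priority number than bot-control-default and is therefore evaluated after the labels have been added.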

Rate-based rule

As the last rule, we add a rate-based rule (rate-based-captcha) with the following condition:

If a specific source IP sends more than 500 requests in 5 minutes, that client will be presented with a CAPTCHA for 5 minutes.
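The sketch below shows how such a rule might be declared and, separately, a simplified local model of the counting behind it. The metric name is an assumption. The `RateTracker` class is hypothetical and uses an exact sliding window per source IP, whereas the real WAF evaluates request rates periodically, so its behavior is only approximated here.

```python
from collections import defaultdict, deque

# Sketch of rate-based-captcha in the AWS WAF rule JSON format.
# Assumption: the metric name; it is not given in the text.
rate_based_captcha = {
    "Name": "rate-based-captcha",
    "Priority": 3,
    "Statement": {
        "RateBasedStatement": {
            "Limit": 500,                # requests per evaluation window
            "EvaluationWindowSec": 300,  # 5 minutes
            "AggregateKeyType": "IP",    # count per source IP
        }
    },
    "Action": {"Captcha": {}},
    "VisibilityConfig": {
        "SampledRequestsEnabled": True,
        "CloudWatchMetricsEnabled": True,
        "MetricName": "rate-based-captcha",
    },
}


class RateTracker:
    """Simplified sliding-window model of a per-IP rate limit."""

    def __init__(self, limit: int = 500, window: float = 300.0):
        self.limit = limit
        self.window = window
        self._hits = defaultdict(deque)

    def action_for(self, source_ip: str, now: float) -> str:
        hits = self._hits[source_ip]
        hits.append(now)
        # Drop timestamps that have fallen out of the window.
        while hits and hits[0] <= now - self.window:
            hits.popleft()
        return "CAPTCHA" if len(hits) > self.limit else "ALLOW"
```

With a limit of 500, the 501st request from the same IP within the window is the first one to receive the CAPTCHA action in this model.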

Final rule set

Our final set of rules looks like this:

| Rule name | Rule priority | Purpose |
|---|---|---|
| block_webfonts_path | 0 | Blocks URI path |
| bot-control-default | 1 | Blocks unverified bots, labels verified bots |
| bot-control-block-verified | 2 | Blocks verified bots by matching label |
| rate-based-captcha | 3 | Presents a CAPTCHA to source IPs that exceed the rate limit |
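To illustrate how the priorities interact, here is a minimal local model of the evaluation order. It is a sketch, not the WAF's implementation: the `Request` fields are hypothetical simplifications, and the default action `ALLOW` for requests that match no rule is an assumption.

```python
from dataclasses import dataclass, field

VERIFIED = "awswaf:managed:aws:bot-control:bot:verified"


@dataclass
class Request:
    path: str
    bot: str = "none"  # simplified: "none", "verified" or "unverified"
    over_rate_limit: bool = False
    labels: set = field(default_factory=set)


def block_webfonts_path(req):
    return "BLOCK" if req.path == "/webfonts/2775253ec3a5.css" else None


def bot_control_default(req):
    if req.bot == "unverified":
        return "BLOCK"
    if req.bot == "verified":
        req.labels.add(VERIFIED)  # labeling only, so evaluation continues
    return None


def bot_control_block_verified(req):
    return "BLOCK" if VERIFIED in req.labels else None


def rate_based_captcha(req):
    return "CAPTCHA" if req.over_rate_limit else None


RULES = [
    (0, "block_webfonts_path", block_webfonts_path),
    (1, "bot-control-default", bot_control_default),
    (2, "bot-control-block-verified", bot_control_block_verified),
    (3, "rate-based-captcha", rate_based_captcha),
]


def evaluate(req):
    """Return (action, rule name) of the first terminating match."""
    for _, name, rule in sorted(RULES, key=lambda entry: entry[0]):
        action = rule(req)
        if action is not None:
            return action, name
    return "ALLOW", "default"  # assumed default action
```

In this model a verified bot passes bot-control-default with only a label attached and is then stopped by bot-control-block-verified, which is why the order of the two rules matters.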

After installing the ruleset on the test environment, we waited for a few days to collect enough logs to be able to generate some traffic statistics and also to check for false positives (WAF blocks requests that should be allowed) or false negatives (WAF fails to detect harmful traffic).
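A quick way to get such statistics outside of a dashboard is to count blocked requests per terminating rule directly from the WAF logs, which are written as one JSON record per request. The sketch below assumes the standard log fields `action` and `terminatingRuleId`; the sample records are synthetic and heavily shortened, and serve only to show the mechanics.

```python
import json
from collections import Counter


def blocked_by_rule(log_lines):
    """Count BLOCK decisions per terminating rule in WAF log lines."""
    counts = Counter()
    for line in log_lines:
        record = json.loads(line)
        if record.get("action") == "BLOCK":
            counts[record.get("terminatingRuleId", "unknown")] += 1
    return counts


# Synthetic sample records (not real traffic), reduced to a few fields.
SAMPLE_LOG_LINES = [
    json.dumps({"action": "BLOCK", "terminatingRuleId": "block_webfonts_path",
                "httpRequest": {"clientIp": "198.51.100.7",
                                "uri": "/webfonts/2775253ec3a5.css"}}),
    json.dumps({"action": "BLOCK", "terminatingRuleId": "bot-control-default",
                "httpRequest": {"clientIp": "203.0.113.9", "uri": "/"}}),
    json.dumps({"action": "ALLOW", "terminatingRuleId": "Default_Action",
                "httpRequest": {"clientIp": "192.0.2.44", "uri": "/"}}),
    json.dumps({"action": "BLOCK", "terminatingRuleId": "block_webfonts_path",
                "httpRequest": {"clientIp": "198.51.100.8",
                                "uri": "/webfonts/2775253ec3a5.css"}}),
]

for rule_name, count in blocked_by_rule(SAMPLE_LOG_LINES).most_common():
    print(f"{rule_name}: {count}")
```

Reviewing the blocked requests per rule, together with a sample of the allowed ones, is one way to spot false positives and false negatives before going to production.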

We found no problems, which allowed us to point the DNS record of the production environment to the production ALB where the WAF is also attached. The DNS change now routes the traffic of the production environment to the WAF.

Datadog WAF Logs Example Views

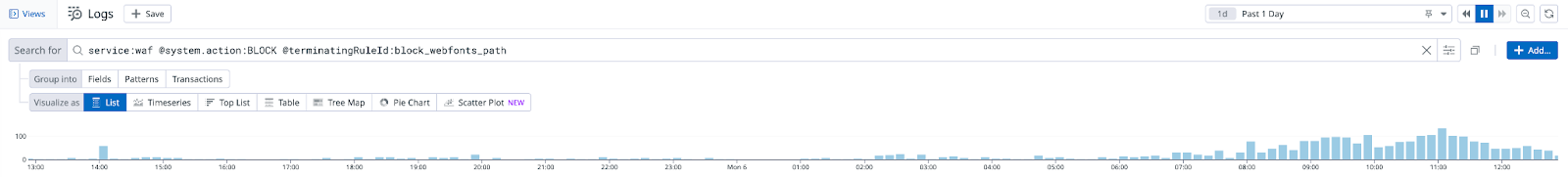

(Datadog showing requests blocked by the WAF using rule block_webfonts_path in the last 24 hours.)

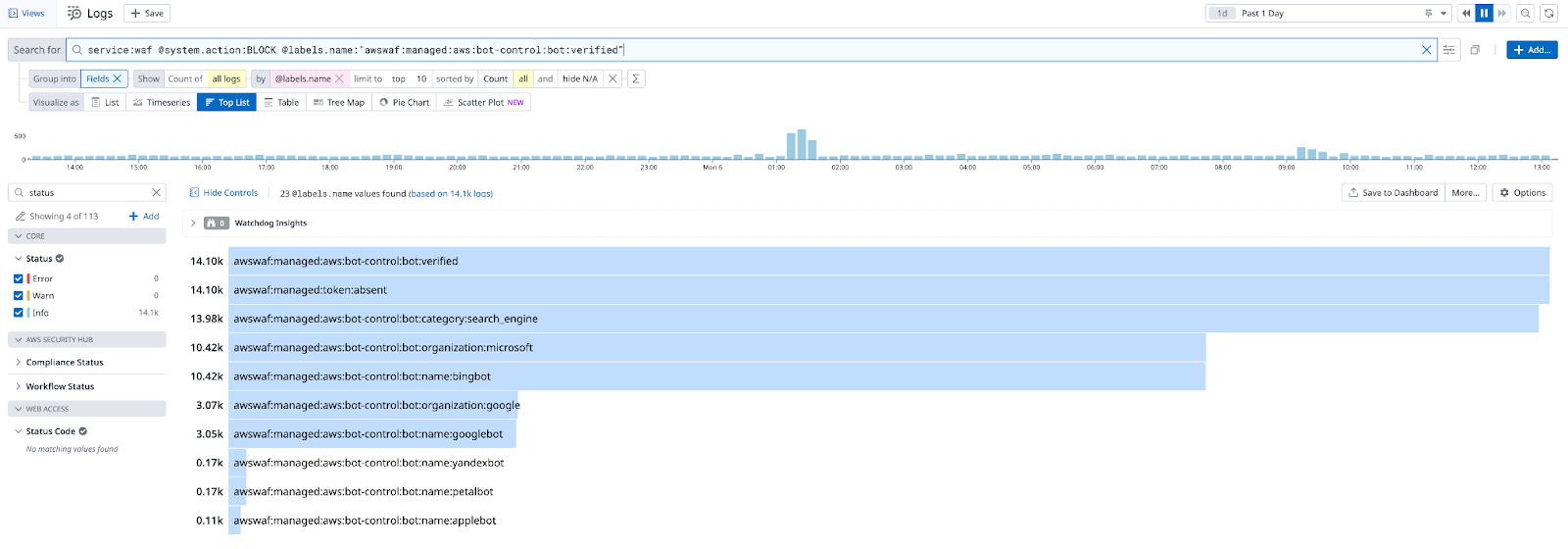

(Datadog statistics for blocked requests from unverified bots for the last 24 hours.)

(Datadog statistics for blocked requests from verified bots for the last 24 hours.)

Conclusion

By using this set of rules, we were able to reduce the number of unwanted requests reaching the web servers and thus prevent further outages.